How to protect the quality of your data analysis reliably yet flexibly

You need high-quality measurement data to carry out analyses and create meaningful reports. You also want to be flexible in generating reports, whether the test is still running or already complete. You may want the reports to be available at different locations without unnecessary data transfers. And, above all, your reports must be tamper-proof at all times.

The challenges of test data analysis

Reports need to be protected against manipulation, and this is increasingly required by statutory regulation. It means that your test management system must perform fully automated analyses without any user interaction. Of course, you still want to be flexible in triggering analyses. Just think of cases where ad-hoc analyses help you make adjustments while the test is still running.

However, you need not only flexible processes but also a suitable architecture. You may want to perform evaluations on the server side or the client side of your system. Or you want to take the analysis to where your data are located in order to avoid the trouble of data transfers. Or you need to be able to work with the data interactively.

These are the capabilities your test management system should have

To meet these challenges, your processes and architecture should support flexible ways of analyzing data and let you choose the methods that best suit your needs.

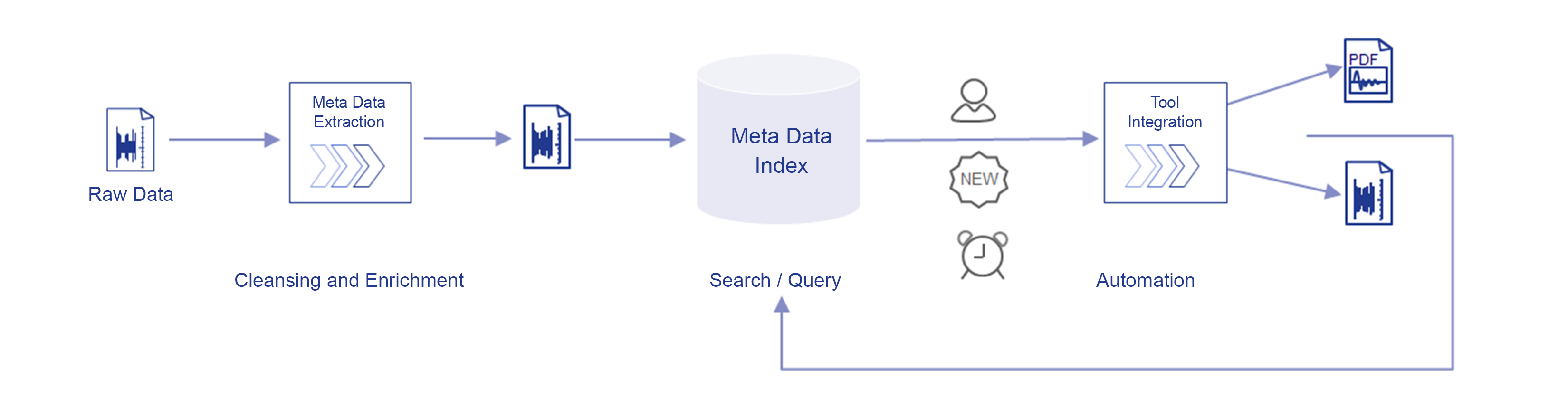

What you want to achieve is full traceability from the requirements through to the results. Meta information makes it easy to integrate the data into the project context automatically and without media breaks. The Meta Data Extractor is the component that determines the meta information from the raw data files received, calls the pre-processor for data standardization and integrates the resulting meta information into the Meta Data Index. This index contains the entire context of a test project: information about your requirements, the test specification, and details that are not contained in the raw data files and go far beyond their scope.
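The extractor flow described above (extract, standardize, integrate) can be sketched roughly as follows. This is only an illustration under stated assumptions, not the product's implementation: the `#`-prefixed `key: value` header format, the function names and the `MetaDataIndex` class are all hypothetical, and the pre-processor here merely normalizes identifiers.

```python
from dataclasses import dataclass, field


def extract_meta(raw_text: str) -> dict[str, str]:
    """Read '# key: value' header lines from a raw data file (assumed format)."""
    meta = {}
    for line in raw_text.splitlines():
        if not line.startswith("#"):
            break  # the header ends at the first data line
        key, _, value = line[1:].partition(":")
        meta[key.strip().lower()] = value.strip()
    return meta


def preprocess(meta: dict[str, str]) -> dict[str, str]:
    """Standardize extracted values (hypothetical rule: upper-case identifiers)."""
    standardized = dict(meta)
    for key in ("project", "test_id"):
        if key in standardized:
            standardized[key] = standardized[key].upper()
    return standardized


@dataclass
class MetaDataIndex:
    """Hypothetical index: maps each project to the meta information of its tests."""
    entries: dict[str, list[dict[str, str]]] = field(default_factory=dict)

    def add(self, meta: dict[str, str]) -> None:
        self.entries.setdefault(meta["project"], []).append(meta)


def ingest(raw_text: str, index: MetaDataIndex) -> dict[str, str]:
    """Extractor flow: extract, standardize, then integrate into the index."""
    meta = preprocess(extract_meta(raw_text))
    index.add(meta)
    return meta
```

Calling `ingest(file_content, index)` for each incoming file would then place the file's meta information in its project context without manual steps.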

Typically, you want your analyses to be started on a schedule or by trigger events. Common triggers are, for example, the receipt of a new file that contains measurement data or the completion of all tests of a project. Such a content-related trigger is possible only with an extended Meta Data Index, which contains, among other things, the number of tests per test series. Of course, you still want the option to trigger ad-hoc analyses manually whenever it suits you, for example to compare the data of different projects.
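To illustrate why a content-related trigger depends on the index knowing the number of tests per series, here is a minimal sketch. The `SeriesStatus` class, the handler name and the analysis names are assumptions made for this example only.

```python
from dataclasses import dataclass, field


@dataclass
class SeriesStatus:
    """Hypothetical index entry for one test series."""
    expected_tests: int  # number of tests per series, taken from the extended index
    received: set[str] = field(default_factory=set)

    def is_complete(self) -> bool:
        return len(self.received) >= self.expected_tests


def on_file_received(series: SeriesStatus, test_id: str) -> list[str]:
    """Return the analyses to start when a measurement file arrives."""
    series.received.add(test_id)
    analyses = ["intermediate_report"]  # event trigger: a new file has arrived
    if series.is_complete():
        analyses.append("final_report")  # content-related trigger: series complete
    return analyses
```

Without `expected_tests`, the handler could react to each file but could never tell that the last one had arrived.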

This way, you can flexibly generate intermediate or final reports. The system will also file your analysis results in the right project or resource context for you.

You probably use different analysis tools and have many ready-made scripts for your specific requirements. Here too, the system should not set any limits: analysis systems with a web API can easily be integrated to cover your requirements. If you additionally use legacy tools that are run from the command line, or a large number of MATLAB, Python or other scripts, these can be integrated using specific frameworks.
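One common way to integrate command-line tools and scripts is to wrap each of them behind a uniform callable. The sketch below shows that general pattern only; the registry, the names and the stand-in "tool" (a one-line Python command) are hypothetical and do not represent the integration framework mentioned above.

```python
import subprocess
import sys
from typing import Callable

# An analysis tool takes the path of a data file and returns its result as text.
AnalysisTool = Callable[[str], str]


def command_line_tool(command: list[str]) -> AnalysisTool:
    """Wrap a command-line tool; the data path is appended as the last argument."""
    def run(data_path: str) -> str:
        completed = subprocess.run(
            [*command, data_path], capture_output=True, text=True, check=True
        )
        return completed.stdout.strip()
    return run


# Hypothetical registry mapping analysis names to wrapped tools.
TOOLS: dict[str, AnalysisTool] = {
    # Stand-in for a legacy tool: a Python one-liner that echoes its argument.
    "echo_path": command_line_tool(
        [sys.executable, "-c", "import sys; print('analysed', sys.argv[1])"]
    ),
}


def run_analysis(name: str, data_path: str) -> str:
    """Look up a registered tool by name and run it on the given file."""
    return TOOLS[name](data_path)
```

Because every tool is exposed through the same signature, a scheduler or trigger handler can start any registered analysis without knowing how the tool itself is executed.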

Secure the quality of your analyses with a sophisticated test data management system

As noted above, analyses must run automatically without user interaction. But how can you still allow manual intervention in the analysis procedure without introducing errors? Dedicated development and release processes ensure that all steps are documented and therefore traceable. They also make sure your workflows are mapped efficiently and help you avoid mistakes.

What is more, your workflows become faster because processing-intensive data analyses are not executed on your workstations but run automatically on servers. That takes the pressure off, so you can concentrate on your real engineering tasks.

Let us help you create the basis for your high-quality and reliable data analysis! We show you how our flexible and open test data management system HyperTest® Boost can optimize your data management.